Convex Functions

Contents

9.8. Convex Functions#

Throughout this section, we assume that \(\VV, \WW\) are real vector spaces. Wherever necessary, they are equipped with a norm \(\| \cdot \|\) or an real inner product \(\langle \cdot, \cdot \rangle\). They are also equipped with a metric \(d(\bx, \by) = \| \bx - \by \|\) as needed.

We suggest the readers to review the notions of graph, epigraph, sublevel sets of real valued functions in Real Valued Functions. Also pay attention to the notion of extended real valued functions, their effective domains, graphs and level sets.

9.8.1. Convexity of a Function#

Definition 9.43 (Convex function)

Let \(\VV\) be a real vector space. A real valued function \(f: \VV \to \RR\) is convex if \(\dom f\) is a convex set and for all \(\bx_1,\bx_2 \in \dom f\), and \(t \in [0, 1]\), we have:

An extended valued function \(f : \VV \to \ERL\) is convex if \(\dom f\) is a convex set and for every \(\bx_1,\bx_2 \in \VV\), and \(t \in [0, 1]\), we have:

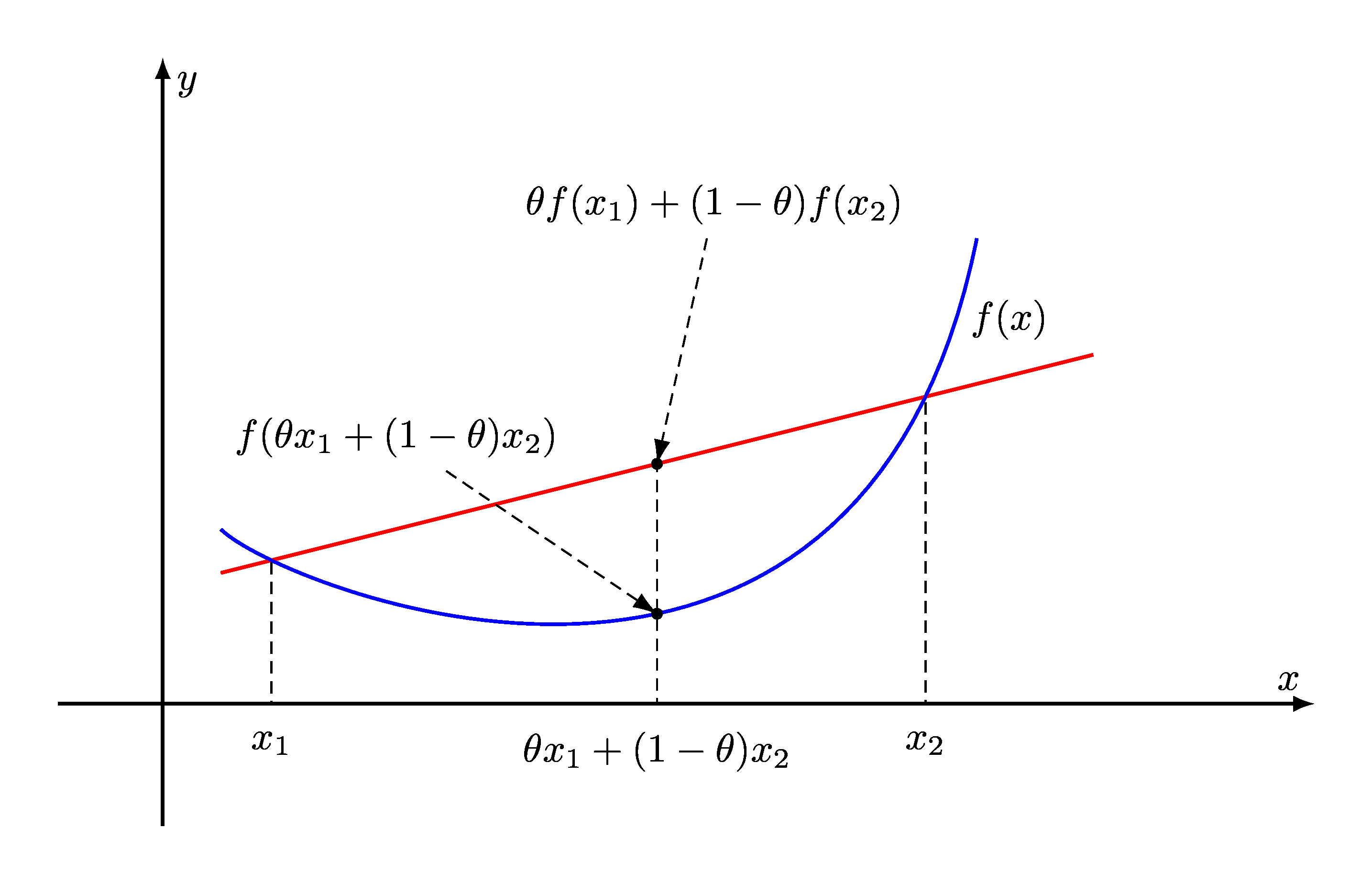

Fig. 9.3 Graph of a convex function. The line segment between any two points on the graph lies above the graph.#

For a convex function, every chord lies above the graph of the function.

9.8.1.1. Strictly Convex Functions#

Definition 9.44 (Strictly convex function)

Let \(\VV\) be a real vector space. A convex function \(f: \VV \to \RR\) is strictly convex if for all \(\bx_1,\bx_2 \in \dom f\), where \(\bx_1\) and \(\bx_2\) are distinct, and \(t \in (0, 1)\), we have:

In other words, the inequality is a strict inequality whenever the point \(\bx = t \bx_1 + (1-t) \bx_2\) is distinct from \(\bx_1\) and \(\bx_2\) both.

9.8.1.2. Concave Functions#

Definition 9.45 (Concave function)

We say that a function \(f\) is concave if \(-f\) is convex. A function \(f\) is strictly concave if \(-f\) is strictly convex.

Example 9.25 (Linear functional)

A linear functional on \(\VV\) in an inner product space has the form of an inner product parameterized by a vector \(\ba \in \VV\) as

i.e. the inner product of \(\bx\) with \(\ba\).

Theorem 9.67

All linear functionals on a real vector space are convex as well as concave.

Proof. For any \(\bx, \by \in \VV\) and \(t \in [0,1]\):

Thus, \(f_{\ba}\) is convex. We can also see that \(-f_{\ba}\) is convex too. Hence, \(f_{\ba}\) is concave too.

9.8.1.3. Arithmetic Mean#

Example 9.26 (Arithmetic mean is convex and concave)

Let \(f : \RR^n \to \RR\) be given by:

Arithmetic mean is a linear function. In fact

for all \(\alpha,\beta \in \RR\).

Thus, for \(t \in [0,1]\)

Thus, arithmetic mean is both convex and concave.

9.8.1.4. Affine Function#

Example 9.27 (Affine functional)

An affine functional is a special type of affine function which maps a vector from \(\VV\) to a scalar in the associate field \(\FF\).

On real vector spaces, an affine functional on \(\VV\) is defined in terms of a vector \(\ba \in \VV\) and a scalar \(b \in \RR\) as

i.e. the inner product of \(\bx\) with \(\ba\) followed by a translation by the amount of \(b\).

Theorem 9.68

All affine functionals on a real vector space are convex as well as concave.

Proof. A simple way to show this is to recall that for any affine function \(T\),

holds true for any \(\bx, \by \in \VV\) and \(t \in \FF\).

In the particular case of real affine functionals, for any \(\bx, \by \in \VV\) and \(t \in [0,1]\), the previous identity reduces to:

This establishes that \(f_{\ba, b}\) is both convex and concave.

Another way to prove this is by following the definition of \(f_{\ba, b}\) above:

9.8.1.5. Absolute Value#

Example 9.28 (Absolute value is convex)

Let \(f : \RR \to \RR\) be:

with \(\dom f = \RR\).

Recall that \(|x|\) is a norm on the real line \(\RR\). Thus, it satisfies the triangle inequality:

In particular, for any \(t \in [0,1]\)

Thus \(f\) satisfies the convexity defining inequality (9.1):

Hence, \(f\) is convex.

9.8.1.6. Norms#

Theorem 9.69 (All norms are convex)

Let \(\| \cdot \| : \VV \to \RR\) be a norm on a real vector space \(\VV\). Then, it satisfies the triangle inequality:

In particular, for any \(t \in [0,1]\)

Thus \(f\) satisfies the convexity defining inequality (9.1). Hence, \(f\) is convex.

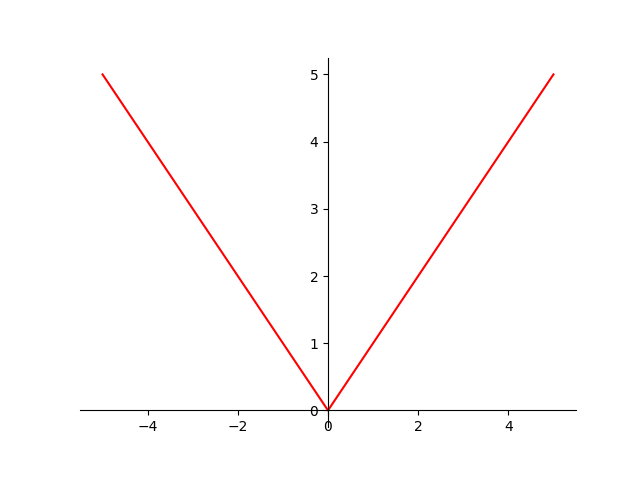

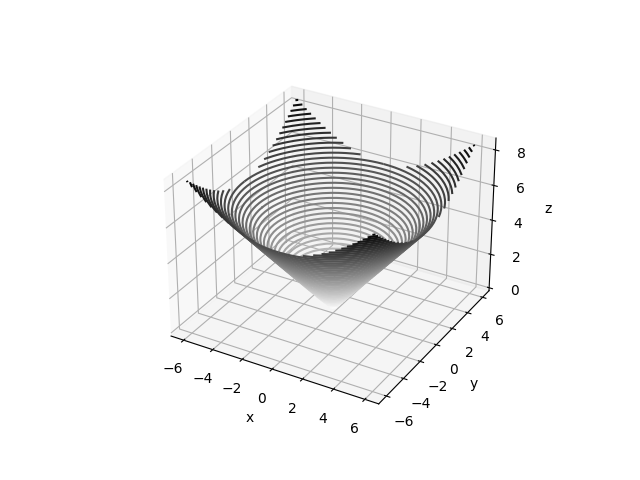

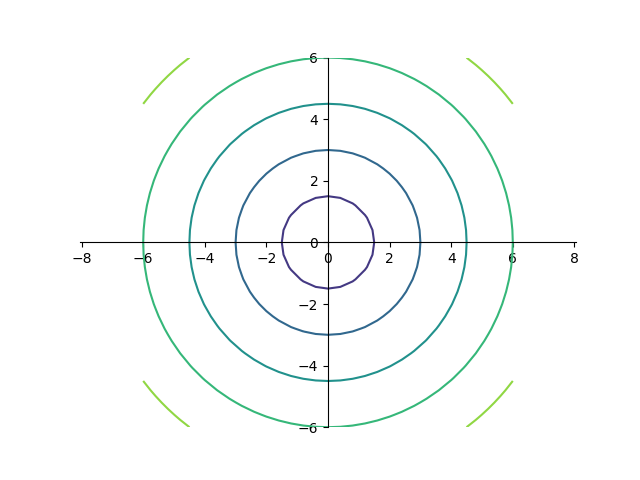

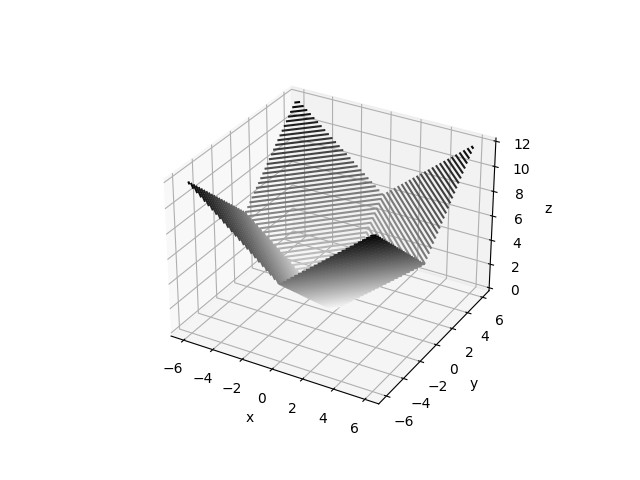

Fig. 9.4 \(\ell_2\) norm for \(\RR^2\). \(\| \cdot \|_2 : \RR^2 \to \RR\) 3D contour plots.#

Fig. 9.5 \(\ell_2\) norm for \(\RR^2\). \(\| \cdot \|_2 : \RR^2 \to \RR\) 2D contour plots.#

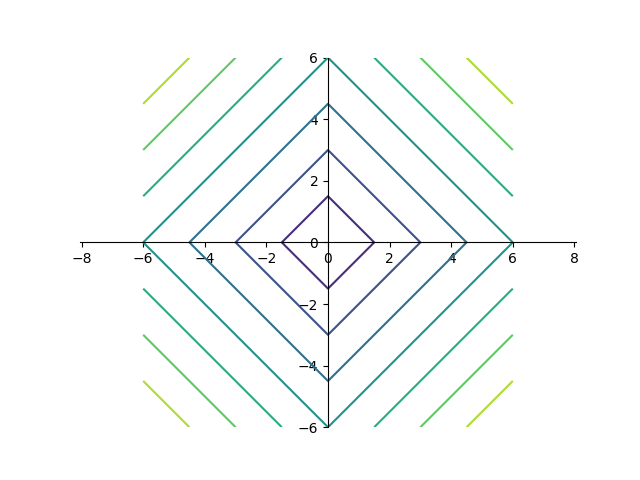

Fig. 9.6 \(\ell_1\) norm for \(\RR^2\). \(\| \cdot \|_1 : \RR^2 \to \RR\) 3D contour plots.#

Fig. 9.7 \(\ell_1\) norm for \(\RR^2\). \(\| \cdot \|_2 : \RR^1 \to \RR\) 2D contour plots.#

9.8.1.7. Max Function#

Example 9.29 (Max function is convex)

Let \(f : \RR^n \to \RR\) be:

with \(\dom f = \RR^n\).

Let \(\bx, \by \in \RR^n\) and \(t \in [0,1]\).

Let \(x = f(\bx) = \max \{ x_1, \dots, x_n \}\) and \(y = f(\by) = \max \{ y_1, \dots, y_n\}\).

Then, for every \(k=1,\dots,n\), we have: \(x_k \leq x\) and \(y_k \leq y\).

Thus, \(t x_k + (1-t) y_k \leq t x + (1-t) y\).

Taking maximum on the left hand side, we obtain:

\[ \max_{k=1}^n (t x_k + (1-t) y_k) \leq t x + (1-t) y. \]But \(f(t \bx + (1-t) \by ) = \max_{k=1}^n (t x_k + (1-t) y_k)\).

Thus, \(f\) satisfies the convexity defining inequality (9.1):

\[ f(t \bx + (1-t) \by ) \leq t f(\bx) + (1-t) f(\by). \]Thus, \(f\) is convex.

9.8.1.8. Geometric Mean#

Example 9.30 (Geometric mean is concave)

Let \(f : \RR^n \to \RR\) be given by:

with \(\dom f = \RR^n_{++}\).

Recall from AM-GM inequality:

Let \(\bx, \by \in \RR^n_{++}\) and \(t \in [0,1]\).

Let \(\bz = t \bx + (1-t) \by\).

Note that \(\bz \in \RR^n_{++}\) too, hence every component of \(\bz\) is positive.

With \(a_i = \frac{x_i}{z_i}\), and applying AM-GM inequality:

\[ \frac{f(\bx)}{f(\bz)} = \left ( \prod_{i=1}^n \frac{x_i}{z_i} \right )^{\frac{1}{n}} \leq \frac{1}{n} \left ( \sum_{i=1}^n \frac{x_i}{z_i} \right ). \]With \(a_i = \frac{y_i}{z_i}\), and applying AM-GM inequality:

\[ \frac{f(\by)}{f(\bz)} = \left ( \prod_{i=1}^n \frac{y_i}{z_i} \right )^{\frac{1}{n}} \leq \frac{1}{n} \left ( \sum_{i=1}^n \frac{y_i}{z_i} \right ). \]Multiplying the first inequality by \(t\) and the second by \((1-t)\) and adding them, we get:

\[ \frac{t f(\bx) + (1-t) f(\by)}{f(\bz)} \leq \frac{1}{n} \left ( \sum_{i=1}^n\frac{t x_i}{z_i} + \frac{(1-t) y_i}{z_i} \right ). \]Recall that \(z_i = t x_i + (1-t) y_i\). Thus, the inequality above simplifies to:

\[\begin{split} &\frac{t f(\bx) + (1-t) f(\by)}{f(\bz)} \leq 1 \\ &\iff t f(\bx) + (1-t) f(\by) \leq f(\bz) = f(t \bx + (1-t) \by). \end{split}\]Thus, \(f\) is concave.

9.8.1.9. Powers#

Example 9.31 (Powers of absolute value)

Let \(f : \RR \to \RR\) be:

with \(\dom f = \RR\).

For \(p=1\), the absolute value function \(f(x) = |x|\) is convex.

Consider the case where \(p > 1\). Let \(q\) be its conjugate exponent given by \(\frac{1}{p} + \frac{1}{q} = 1\).

Let \(x, y \in \RR\) and \(s, t \in (0, 1)\) with \(s + t= 1\).

By triangle inequality:

\[ | s x + t y | \leq s | x| + t |y|. \]We can write this as:

\[ s | x| + t |y| = \left ( s^{\frac{1}{p}} |x| \right ) s^{\frac{1}{q}} + \left ( t^{\frac{1}{p}} |y| \right ) t^{\frac{1}{q}}. \]By Hölder's inequality, for some \(a_1, a_2, b_1, b_2 \geq 0\)

\[ a_1 b_1 + a_2 b_2 \leq (a_1^p + a_2^p)^{\frac{1}{p}} + (b_1^q + b_2^q)^{\frac{1}{q}}. \]Let \(a_1 = s^{\frac{1}{p}} |x|\), \(a_2 = t^{\frac{1}{p}} |y|\), \(b_1 = s^{\frac{1}{q}}\) and \(b_2 = t^{\frac{1}{q}}\).

Applying Hölder’s inequality,

\[ | s x + t y | \leq s | x| + t |y| \leq (s |x|^p + t |y|^p)^{\frac{1}{p}} (s + t)^{\frac{1}{q}} = (s |x|^p + t |y|^p)^{\frac{1}{p}}. \]Taking power of \(p\) on both sides, we get:

\[ | s x + t y |^p \leq s |x|^p + t |y|^p. \]Which is the same as

\[ f(s x + t y) \leq s f(x) + t f(y). \]Thus, \(f\) is convex.

9.8.1.10. Empty Function#

Observation 9.4 (Empty function is convex)

Let \(f: \VV \to \RR\) be such that \(\dom f = \EmptySet\). Then \(f\) is convex.

Proof. The empty set \(\dom f\) is vacuously convex. The defining inequality (9.1) is vacuously true for \(f\) since its domain is empty.

This observation may sound uninteresting however it is important in the algebra of convex functions. Definition 2.48 provides definitions for sum of two partial functions and scalar multiplication with a partial function. If two convex functions \(f\) and \(g\) are such that \(\dom f \cap \dom g = \EmptySet\), then their sum \(f+g\) is empty function. The observation above says that \(f+g\) is still a convex function.

Jensen's inequality, discussed later in the section, generalizes the notion of convexity of a function to arbitrary convex combinations of points in its domain.

9.8.2. Convexity on Lines in Domain#

Theorem 9.70 (\(f\) is convex = \(f\) is convex on lines in domain)

Let \(\VV\) be a real vector space. A function \(f : \VV \to \RR\) is convex if and only if for any \(\bx \in \dom f\) and any \(\bv \in \VV\), the function \(g(t) = f(\bx + t\bv)\) is convex (on its domain , \(\{ t \in \RR \ST \bx + t\bv \in \dom f\}\)).

In other words, \(f\) is convex if and only if it is convex when restricted to any line that intersects its domain.

Proof. For any arbitrary \(f\), and for any

\(\bx \in \dom f, \bv \in \VV\)

we have defined \(g : \RR \to \RR\) as:

with the domain:

Assume \(f\) to be convex. To show that \(g\) is convex, we need to show that \(\dom g\) is convex and \(g\) satisfies the convexity inequality.

Let \(t_1, t_2 \in \dom g\).

It means that there are \(\by = \bx + t_1 \bv \in \dom f\) and \(\bz = \bx + t_2 \bv \in \dom f\),

and \(g(t_1) = f(\by)\) and \(g(t_2) = f(\bz)\).

Let \(r \in [0,1]\) and \(t = rt_1 + (1-r)t_2\).

Note that:

\[\begin{split} \bx + t \bv &= \bx + (rt_1 + (1-r)t_2) \bv\\ &= r\bx + (1-r)\bx + rt_1 \bv + (1-r)t_2 \bv\\ &= r (\bx + t_1 \bv) + (1-r)(\bx + t_2 \bv)\\ &= r \by + (1-r) \bz. \end{split}\]Since \(\dom f\) is convex, hence \(r \by + (1-r) \bz \in \dom f\).

Thus, \(g\) is defined at \(t = rt_1 + (1-r)t_2\) for all \(r \in [0,1]\).

We have shown that if \(t_1, t_2 \in \dom g\), then \(t = rt_1 + (1-r)t_2 \in \dom g\) for all \(r \in [0,1]\).

Thus, \(\dom g\) is convex.

Now,

\[\begin{split} g(t) &= g(r t_1 + (1-r)t_2)\\ &= f(r \by + (1-r) \bz)\\ &\leq r f(\by) + (1-r) f(\bz)\\ &= r g(t_1) + (1-r) g(t_2). \end{split}\]We showed that for any \(t_1, t_2 \in \dom g\) and \(r \in [0,1]\),

\[ g (r t_1 + (1-r) t_2 )\leq r g(t_1) + (1-r) g(t_2). \]Thus, \(g\) is convex.

For the converse, we need to show that if for any \(\bx \in \dom f\) and any \(\bv \in \VV\), \(g\) is convex, then \(f\) is convex.

Assume for contradiction that \(f\) is not convex. Then, either \(\dom f\) is not convex or there exists some \(\bx, \by \in \dom f\) and \(t \in [0,1]\) such that:

We show that both of these conditions lead to contradictions.

Assume \(\dom f\) is not convex.

Then, there exists \(\bx, \by \in \dom f\) such that for some \(t \in [0,1]\):

\[ (1 - t)\bx + t \by \notin \dom f. \]Let \(\bv = \by - \bx\).

Note that \((1 - t)\bx + t \by = \bx + t \bv\).

Picking \(g\) for this particular choice of \(\bx\) and \(\bv\), we note that \(g\) is defined at \(t=0\) and \(t=1\) since they corresponding to the points \(\bx\) and \(\by\) respectively in \(\dom f\).

Since \(g\) is convex by hypothesis, hence, \(g\) is defined at every \(t \in [0,1]\).

This contradicts the fact that \(g\) is not defined at some \(t \in [0,1]\).

Thus, \(\dom f\) must be convex.

We now accept that \(\dom f\) is convex, but there exists some \(\bx, \by \in \dom f\) and \(t \in [0,1]\) such that:

Again, let \(\bv = \by - \bx\) and note that \((1 - t)\bx + t \by = \bx + t \bv\).

Pick \(g\) for this choice of \(\bx\) and \(\bv\).

We have \(g(t) = f(\bx + t \bv)\).

In particular, \(g(0) = f(\bx)\) and \(g(1) = f(\by)\).

Since \(g\) is convex, hence for every \(t \in [0,1]\),

\[\begin{split} f((1-t)\bx + t\by) &= f(\bx + t \bv)\\ &= g(t)\\ &= g ((1-t)0 + t 1)\\ &\leq (1-t)g(0) + t g(1)\\ &= (1-t)f(\bx) + t f(\by). \end{split}\]We have a contradiction.

Thus, \(f\) must be convex.

A good application of this result is in showing the concavity of the log determinant function in Example 9.49 below.

9.8.3. Epigraph#

The epigraph of a function \(f: \VV \to \RR\) is given by:

\(\VV \oplus \RR\) is the direct sum of \(\VV\) and \(\RR\) having appropriate vector space structure.

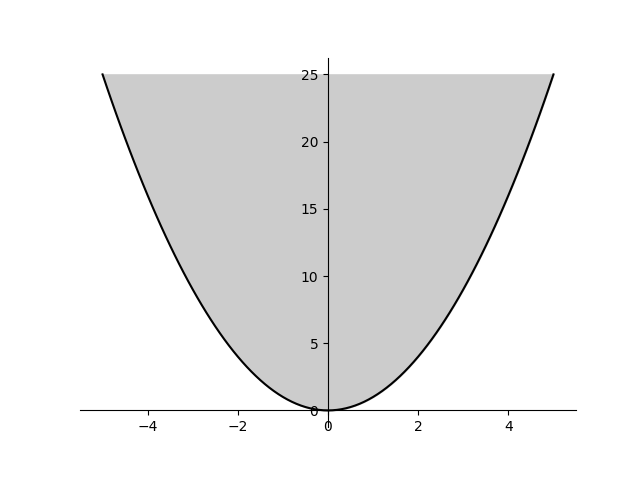

Fig. 9.8 Epigraph of the function \(f(x) = x^2\).#

The definition of epigraph also applies for extended real valued functions \(f: \VV \to \ERL\).

9.8.3.1. Convex Functions#

Theorem 9.71 (Function convexity = Epigraph convexity)

Let \(\VV\) be a real vector space. A function \(f: \VV \to \RR\) is convex if and only if its epigraph \(\epi f\) is a convex set.

This statement is also valid for extended real valued functions.

Proof. Let \(C = \epi f\).

Assume \(f\) is convex.

Let \((\bx_1, t_1), (\bx_2, t_2) \in \epi f\).

Then, \(f(\bx_1) \leq t_1\) and \(f(\bx_2) \leq t_2\).

Let \(r \in [0,1]\).

Consider the point \((\bx, t) = r(\bx_1, t_1) + (1-r)(\bx_2, t_2)\).

We have \(\bx = r \bx_1 + (1 -r)(\bx_2)\).

And \(t = r t_1 + (1-r)t_2\).

Then, \( r f(\bx_1) + (1-r) f(\bx_2) \leq r t_1 + (1-r)t_2 = t\).

Since \(f\) is convex, hence \(f(\bx) = f(r \bx_1 + (1 -r)(\bx_2)) \leq r f(\bx_1) + (1-r)f(\bx_2)\).

But then, \(f(\bx) \leq t\).

Thus, \((\bx, t) \in \epi f\).

Thus, \((\bx_1, t_1), (\bx_2, t_2) \in \epi f\) implies \(r(\bx_1, t_1) + (1-r)(\bx_2, t_2) \in \epi f\) for all \(r \in [0,1]\).

Thus, \(\epi f\) is convex.

Assume \(\epi f\) is convex.

Let \(\bx_1, \bx_2 \in \dom f\).

Let \(t_1 = f(\bx_1)\) and \(t_2 = f(\bx_2)\).

Then, \((\bx_1, t_1), (\bx_2, t_2) \in \epi f\).

Let \(r \in [0,1]\).

Let \(\bx = r \bx_1 + (1-r)\bx_2\).

Since \(\epi f\) is convex, hence \(r(\bx_1, t_1) + (1-r)(\bx_2, t_2) \in \epi f\).

i.e., \((r \bx_1 + (1-r)\bx_2, r t_1 + (1-r)t_2) \in \epi f\).

Thus, \(\bx = r \bx_1 + (1-r)\bx_2 \in \dom f\).

Thus, \(\dom f\) is convex.

And, \(f(\bx) \leq r t_1 + (1-r)t_2\).

But \(r t_1 + (1-r)t_2 = r f(\bx_1) + (1-r)f(\bx_2)\).

Thus, \(f(r \bx_1 + (1-r)\bx_2) \leq r f(\bx_1) + (1-r)f(\bx_2)\).

Thus, \(f\) is convex as \(\bx_1, \bx_2 \in \dom f\) and \(r \in [0,1]\) were arbitrary.

Note that \(\dom f\) is the projection of \(\epi f\) from \(\VV \oplus \RR\) to \(\VV\). Due to Theorem 9.31, \(\epi f\) convex implies \(\dom f\) convex as the projection is a linear operation.

Theorem 9.72 (Convex function from convex set by minimization)

Let \(C\) be a nonempty convex set in \(\VV \oplus \RR\). Let \(f: \VV \to \ERL\) be the function defined by

Then \(f\) is convex.

Proof. We show the convexity of \(f\) by showing the convexity of its epigraph.

Let \((\bx, s)\) and \((\by, t)\) be two points in \(\epi f\).

Then \(f(\bx) \leq s\) and \(f(\by) \leq t\).

By the definition of \(f\) (infimum rule), for every \(k \in \Nat\), there exists \((\bx, s_k) \in C\) such that \(s_k \leq s + \frac{1}{k}\).

Similarly, for every \(k \in \Nat\), there exists \((\by, t_k) \in C\) such that \(t_k \leq t + \frac{1}{k}\).

Consider the sequences \(\{ (\bx, s_k) \}\) and \(\{ (\by, t_k) \}\).

By the convexity of \(C\), for every \(r \in [0,1]\) and every \(k\)

\[ (r \bx + (1 -r) \by, r s_k + (1-r) t_k) \in C. \]Hence for every \(k\)

\[ f(r \bx + (1 -r) \by) \leq r s_k + (1-r) t_k. \]Taking the limit \(k \to \infty\), we have

\[ f(r \bx + (1 -r) \by) \leq r s + (1-r) t. \]Hence \((r \bx + (1 -r) \by, r s + (1-r) t) \in \epi f\) for every \(r \in [0,1]\).

Hence \(\epi f\) is convex.

Hence \(f\) is convex.

9.8.3.2. Nonnegative Homogeneous Functions#

Recall that a real valued function \(f : \VV \to \RR\) is nonnegative homogeneous if for every \(t \in \RR_+\), \(f(t \bx) = t f(\bx)\).

Theorem 9.73 (Nonnegative homogeneity = Epigraph is cone)

A function \(f : \VV \to \RR\) is nonnegative homogeneous if and only if its epigraph \(\epi f\) is a cone in \(\VV \oplus \RR\).

Proof. Let \(f\) be nonnegative homogeneous.

Let \((\bx, f(\bx)) \in \epi f\).

Let \(t \geq 0\).

Then, \(t(\bx, f(\bx)) = (t\bx, t f(\bx)) = (t\bx, f(t\bx))\).

But \((t\bx, f(t\bx)) \in \epi f\) since \(f\) is nonnegative homogeneous.

Thus, \(\VV \oplus \RR\) is closed under nonnegative scalar multiplication.

Thus, \(\epi f\) is a cone.

Assume \(\epi f\) is a cone.

Let \(\bx \in \dom f\).

Then, \((\bx, f(\bx)) \in \epi f\).

Since \((\bzero, 0) \in \epi f\) as \(\epi f\) is a cone, hence \(f(0 \bx) = 0 f(\bx) = 0\).

Now, let \(t > 0\).

Since \(\epi f\) is a cone then, \(t(\bx, f(\bx)) \in \epi f\).

Then, \(t(\bx, f(\bx)) = (t\bx, t f(\bx)) \in \epi f\).

By definition of epigraph, \(f(t\bx) \leq t f(\bx)\).

We claim that \(f(t \bx) = t f(\bx)\) must hold true.

Assume for contradiction that \(f(t \bx) = s f(\bx)\) where \(s < t\).

Then, \((t\bx, s f(\bx)) \in \epi f\).

Since \(\epi f\) is a cone, hence dividing by \(t\), we get, \((\bx, \frac{s}{t} f(\bx)) \in \epi f\).

But then, \(f(\bx) \leq \frac{s}{t} f(\bx) < f(\bx)\) which is a contradiction.

Hence, \(f(t \bx) = t f(\bx)\) must hold true.

9.8.3.3. Nonnegative Homogeneous Convex Functions#

Theorem 9.74 (Nonnegative homogeneous convex function epigraph characterization)

Let \(\VV\) be a real vector space. A real valued function \(f: \VV \to \RR\) is nonnegative homogeneous and convex if and only if its epigraph \(\epi f\) is a convex cone.

Proof. By Theorem 9.73, \(f\) is nonnegative homogeneous if and only if \(\epi f\) is a cone.

By Theorem 9.71, \(f\) is convex if and only if \(\epi f\) is convex.

Thus, \(f\) is nonnegative homogeneous and convex if and only if its epigraph \(\epi f\) is a convex cone.

Theorem 9.75 (Nonnegative homogeneous convex function is subadditive)

Let \(\VV\) be a real vector space. A nonnegative homogeneous function \(f: \VV \to \RR\) is convex if and only if it is subadditive:

Proof. Assume that \(f\) is nonnegative homogeneous and convex.

By Theorem 9.74, \(\epi f\) is a convex cone.

Then, by Theorem 9.47 \(\epi f\) is closed under addition and nonnegative scalar multiplication.

Pick any \(\bx, \by \in \dom f\).

Then \((\bx, f(\bx)), (\by, f(\by)) \in \epi f\).

Then, their sum \((\bx + \by, f(\bx) + f(\by)) \in \epi f\).

This means that \(f(\bx + \by) \leq f(\bx) + f(\by)\) by definition of epigraph.

Now for the converse, assume that \(f\) is nonnegative homogeneous and subadditive.

By Theorem 9.73, \(\epi f\) is a cone.

Consequently, it is closed under nonnegative scalar multiplication.

Pick any \(\bx, \by \in \dom f\).

Let \((\bx, \alpha), (\by, \beta) \in \epi f\).

Then, \(f(\bx) \leq \alpha\) and \(f(\by) \leq \beta\).

Now, \((\bx + \by, f(\bx + \by)) \in \epi f\).

Since \(f\) is subadditive, hence \(f(\bx + \by) \leq f(\bx) + f(\by) \leq \alpha + \beta\).

Thus, \((\bx + \by, \alpha + \beta) \in \epi f\).

We have shown that for any \((\bx, \alpha), (\by, \beta) \in \epi f\), \((\bx + \by, \alpha + \beta) \in \epi f\).

Thus, \(\epi f\) is closed under vector addition.

Since \(\epi f\) is closed under vector addition and nonnegative scalar multiplication, hence \(\epi f\) is a convex cone.

But then, by Theorem 9.74, \(f\) is convex.

Corollary 9.5

Let \(\VV\) be a real vector space. Let \(f: \VV \to \RR\) be a nonnegative homogeneous convex function. Then,

Proof. Since \(f\) is nonnegative homogeneous convex, hence \(f\) is subadditive.

Thus,

But,

Thus,

Thus,

Theorem 9.76 (Linearity of nonnegative homogeneous functions)

Let \(\VV\) be a real vector space. Let \(f: \VV \to \RR\) be a nonnegative homogeneous convex function. Then, \(f\) is linear on a subspace \(L\) of \(\VV\) if and only if \(f(-\bx) = -f(\bx)\) for every \(\bx \in L\).

If \(L\) is finite dimensional, then this is true if merely \(f(-\bb_i) = -f(\bb_i)\) for all the vectors in some basis \(\bb_1, \dots, \bb_n\) for \(L\).

Proof. We are given that \(f\) is nonnegative homogeneous convex.

Assume that \(f\) is linear over \(L\). Then by definition of linearity, \(f(-\bx) = -f(\bx)\) for every \(\bx \in L\).

Now, for the converse, assume that \(f(-\bx) = -f(\bx) \Forall \bx \in L\).

Let \(\bx, \by \in L\).

Then, \(f(\bx + \by) \leq f(\bx) + f(\by)\) since \(f\) is subadditive.

Also,

\[ f(\bx + \by) = -f(-(\bx + \by)) = -f(-\bx + (-\by)). \]But

\[ f(-\bx + (-\by)) \leq f(-\bx) + f(-\by) = -f(\bx) - f(\by) = - (f(\bx) + f(\by)). \]Thus,

\[ f(\bx + \by) = -f(-\bx + (-\by)) \geq f(\bx) + f(\by). \]Combining, we get \(f(\bx + \by) = f(\bx) + f(\by)\). Thus, \(f\) is additive over \(L\).

For any \(t < 0\),

\[ f(t \bx) = - f(-t \bx) = - (-t) f(\bx) = t f(\bx). \]Thus, for any \(t \in \RR\), \(f(t \bx) = t f(\bx)\).

Thus, \(f\) is homogeneous over \(L\).

Since \(f\) is additive and homogeneous over \(L\), hence \(f\) is linear.

Finally, assume that \(L\) is finite dimensional and has a basis \(\bb_1, \dots, \bb_n\).

By previous argument \(f\) is homogeneous on the basis vectors; i.e., \(f(t \bb_i) = t f(\bb_i)\) for any \(t \in \RR\) and any basis vector.

Let \(\bx \in L\).

Then, \(\bx = \sum_{i=1}^n a_i \bb_i\).

Since \(f\) is subadditive, hence

\[\begin{split} f(a_1 \bb_1) + \dots + f(a_n \bb_n) & \geq f(a_1 \bb_1 + \dots + a_n \bb_n)\\ &= f(\bx) \\ &\geq - f(-\bx)\\ &= - f(-a_1 \bb_1 - \dots - a_n \bb_n)\\ &\geq - f(-a_1 \bb_1) - \dots - f(-a_n \bb_n)\\ &=f(a_1 \bb_1) + \dots + f(a_n \bb_n). \end{split}\]This can hold only if all the inequalities are equalities in the previous derivation.

Thus, \(f(\bx) = - f(-\bx)\) or \(-f(\bx) = f(-\bx)\) for every \(\bx \in L\).

Then, following the previous argument, \(f\) is linear over \(L\).

9.8.4. Extended Value Extensions#

Tracking domains of convex functions is difficult. It is often convenient to extend a convex function \(f : \VV \to \RR\) with a domain \(\dom f \subset \VV\) to all of \(\VV\) by defining it to be \(\infty\) outside its domain.

Definition 9.46 (Extended value extension)

The extended value extension of a convex function \(f: \VV \to \RR\) is defined as \(\tilde{f} : \VV \to \ERL\) given by:

We mention that the defining inequality (9.1) for a convex function \(f\) remains valid (rather gets extended) for its extended value extension too. If \(f\) is convex over \(\dom f\), then for any \(\bx_1, \bx_2 \in \VV\), and \(0 < t < 1\), we have

At \(t=0\) and \(t=1\), this is always an equality.

Since \(\dom f\) is convex, hence if both \(\bx_1, \bx_2 \in \dom f\), then \(t \bx_1 + (1-t) \bx_2 \in \dom f\) too.

The inequality then reduces to (9.1).

If either \(\bx_1\) or \(\bx_2\) is not in \(\dom f\), then the R.H.S. becomes \(\infty\) and the inequality stays valid.

Definition 9.47 (Effective domain)

The effective domain of an extended valued function \(\tilde{f} : \VV \to \ERL\) is defined as:

An equivalent way to define the effective domain is:

Theorem 9.77 (Effective domain and sum of functions)

If \(f\) and \(g\) are two extended valued functions, then \(\dom (f + g) = \dom f \cap \dom g\).

Proof. We proceed as follows:

At any \(\bx \in \dom f \cap \dom g\), both \(f(\bx)\) and \(g(\bx)\) are finite.

Thus, \(f(\bx) + g(\bx)\) is finite.

Hence, \(\bx \in \dom (f + g)\).

At any \(\bx \in \VV \setminus (\dom f \cap \dom g)\), either \(f(\bx) = \infty\) or \(g(\bx) = \infty\).

Thus, \((f + g)(\bx) = f(\bx) + g(\bx) = \infty\).

Thus, \(\bx \notin \dom (f + g)\).

Several commonly used convex functions have vastly different domains. If we work with these functions directly, then we have to constantly worry about identifying the domain and manipulating the domain as per the requirements of the operations we are considering. This quickly becomes tedious.

An alternative approach is to work with the extended value extensions of all functions. We don’t have to worry about tracking the function domain. The domain can be identified whenever needed by removing the parts from \(\VV\) where the function goes to \(\infty\).

In this book, unless otherwise specified, we shall assume that the functions are being treated as their extended value extensions.

9.8.5. Proper Functions#

Definition 9.48 (Proper function)

An extended real-valued function \(f : \VV \to \ERL\) is called proper if its domain is nonempty, it never takes the value \(-\infty\) and is not identically equal to \(\infty\).

In other words, \(\epi f\) is nonempty and contains no vertical lines.

Putting another way, a proper function is obtained by taking a real valued function \(f\) defined on a nonempty set \(C \subseteq \VV\) and then extending it to all of \(\VV\) by setting \(f(\bx) = +\infty\) for all \(\bx \notin C\).

It is easy to see that the codomain for a proper function can be changed from \(\ERL\) to \(\RERL\) to clarify that it never takes the value \(-\infty\).

Definition 9.49 (Improper function)

An extended real-valued function \(f : \VV \to \ERL\) is called improper if it is not proper.

For an improper function \(f\):

\(\dom f\) may be empty.

\(f\) might take a value of \(-\infty\) at some \(\bx \in \VV\).

Most of our study is focused on proper functions. However, improper functions sometimes do arise naturally in convex analysis.

Example 9.32 (An improper function)

Consider a function \(f: \RR \to \ERL\) as described below:

Then, \(f\) is an improper function.

9.8.6. Indicator Functions#

Definition 9.50 (Indicator function)

Let \(C \subseteq \VV\). Then, its indicator function is given by \(I_C(\bx) = 0 \Forall \bx \in C\). Here, \(\dom I_C = C\).

The extended value extension of an indicator function is given by:

Theorem 9.78 (Indicator functions and convexity)

An indicator function is convex if and only if its domain is a convex set.

Proof. Let \(C\) be convex. Let \(I_C\) be its indicator function. Let \(\bx, \by \in C\). Then, for any \(t \in [0,1]\)

since \(t \bx + (1-t) \by \in C\) as \(C\) is convex.

Thus, \(I_C\) is convex.

If \(C\) is not convex, then \(\dom I_C\) is not convex. Hence, \(I_C\) is not convex.

Theorem 9.79 (Restricting the domain of a function)

Let \(f\) be a proper function. Then,

Also,

The statement is obvious. And quite powerful.

The problem of minimizing a function \(f\) over a set \(C\) is same as minimizing \(f + I_C\) over \(\VV\).

9.8.7. Sublevel Sets#

Recall from Definition 2.53 that the \(\alpha\)-sublevel set for a real valued function \(f : \VV \to \RR\) is given by

The strict sublevel sets for a real valued function \(f: \VV \to \RR\) can be defined as

The sublevel sets can be shown to be intersection of a set of strict sublevel sets.

Theorem 9.80 (Sublevel set as intersection)

Let \(\VV\) be a real vector space. Let \(f : \VV \to \RR\) be a real valued function. Let

denote the strict sublevel set of \(f\) for \(\alpha\). Let

denote the sublevel set of \(f\) for \(\alpha\). Then,

Proof. We show that \(C_{\alpha} \subseteq \bigcap_{\mu > \alpha} O_{\mu}\).

Let \(\bx \in C_{\alpha}\).

Then, \(f(\bx) \leq \alpha\).

Thus, \(f(\bx) < \mu\) for every \(\mu > \alpha\).

Thus, \(\bx \in O_{\mu}\) for every \(\mu > \alpha\).

Thus, \(C_{\alpha} \subseteq \bigcap_{\mu > \alpha} O_{\mu}\).

We now show that \(C_{\alpha} \supseteq \bigcap_{\mu > \alpha} O_{\mu}\).

Let \(\bx \in \bigcap_{\mu > \alpha} O_{\mu}\).

Then, \(f(\bx) < \mu\) for every \(\mu > \alpha\).

Taking the infimum on the R.H.S. over the set \(\{ \mu \in \RR \ST \mu > \alpha \}\), we obtain

\[ f(\bx) \leq \inf \{ \mu \in \RR \ST \mu > \alpha \} = \alpha. \]Thus, \(\bx \in C_{\alpha}\).

Thus, \(\bigcap_{\mu > \alpha} O_{\mu} \subseteq C_{\alpha}\).

Theorem 9.81 (Convexity of sublevel sets)

If \(f : \VV \to \RR\) is convex, then its sublevel sets given by

are convex.

Proof. Assume \(f\) is convex.

Let \(\bx, \by \in C_{\alpha}\).

Then, \(f(\bx) \leq \alpha\) and \(f(\by) \leq \alpha\).

Let \(t \in [0,1]\).

Let \(\bz = t \bx + (1-t)\by\).

Since \(f\) is convex, hence:

\[ f(\bz) \leq t f(\bx) + (1-t) f(\by) \leq t \alpha + (1-t) \alpha = \alpha. \]Thus, \(f(\bz) \leq \alpha\).

Thus, \(\bz \in C_{\alpha}\).

Thus, \(C_{\alpha}\) is convex.

The converse is not true. A function need not be convex even if all its sublevel sets are convex.

Example 9.33

Consider the function \(f(x) = \ln x\). It is concave (Example 9.45).

Its sublevel sets are convex as they are intervals.

Theorem 9.82 (Convexity of strict sublevel sets)

If \(f : \VV \to \RR\) is convex, then its strict sublevel sets given by

are convex.

Proof. Assume \(f\) is convex.

Let \(\bx, \by \in O_{\alpha}\).

Then, \(f(\bx) < \alpha\) and \(f(\by) < \alpha\).

Let \(t \in (0,1)\).

Let \(\bz = t \bx + (1-t)\by\).

Since \(f\) is convex, hence:

\[ f(\bz) \leq t f(\bx) + (1-t) f(\by) < t \alpha + (1-t) \alpha = \alpha. \]Thus, \(f(\bz) < \alpha\).

Thus, \(\bz \in O_{\alpha}\).

Thus, \(O_{\alpha}\) is convex.

An alternate proof for showing the convexity of the sublevel sets is to show it as an intersection of strict sublevel sets.

Theorem 9.83 (Intersection of sublevel sets of convex functions)

Let \(\VV\) be a real vector space. Let \(I\) be an arbitrary index set. Let \(f_i : \VV \to \RERL\) be convex functions for every \(i \in I\). Let \(\alpha_i \in \RR\) for every \(i \in I\). Then,

is a convex set.

Proof. For each \(i \in I\), consider the set

Then, \(C_i\) is a sublevel set of \(f_i\) which is convex. Hence, \(C_i\) is convex. Now, we can see that

Thus, \(C\) is an intersection of convex sets. Hence, \(C\) is convex.

Example 9.34 (Convexity of sublevel sets of the quadratic)

Let \(\bQ \in \SS^n\) be a positive semidefinite matrix. Let \(\ba \in \RR^n\) and \(c \in \RR\).

Consider the sets of the form

This is a sublevel set of the quadratic function \(f(\bx) = \frac{1}{2} \langle \bx, \bQ \bx \rangle + \langle \bx, \ba \rangle + c\).

Since \(f\) is convex, hence the set is convex.

Sets of this form include the solid ellipsoids, paraboloids as well as spherical balls. Here is an example of the spherical ball of the norm induced by the inner product.

9.8.8. Hypograph#

The hypograph of a function \(f: \VV \to \RR\) is given by:

Just like function convexity is connected to epigraph convexity, similarly function concavity is connected to hypograph convexity.

Theorem 9.84 (Function concavity = Hypograph convexity)

A function \(f\) is concave if and only if its hypograph \(\hypo f\) is a convex set.

Proof. \(f\) is concave if and only if \(-f\) is convex if and only if the epigraph of \(-f\) is convex if and only if the hypograph of \(f\) is convex.

9.8.9. Super-level Sets#

Recall from Definition 2.56 that the \(\alpha\)-sublevel set for a real valued function \(f : \VV \to \RR\) is given by

Theorem 9.85

If \(f : \VV \to \RR\) is concave, then its super-level sets are convex.

Proof. Assume \(f\) is concave.

Let \(\bx, \by \in D_{\alpha}\).

Then, \(f(\bx) \geq \alpha\) and \(f(\by) \geq \alpha\).

Let \(t \in [0,1]\).

Let \(\bz = t \bx + (1-t)\by\).

Since \(f\) is concave, hence:

\[ f(\bz) \geq t f(\bx) + (1-t) f(\by) \geq t \alpha + (1-t) \alpha = \alpha. \]Thus, \(f(\bz) \geq \alpha\).

Thus, \(\bz \in D_{\alpha}\).

Thus, \(D_{\alpha}\) is convex.

The converse is not true. A function need not be concave even if all its super-level sets are convex.

Example 9.35

Let geometric mean be given by:

with \(\dom g = \RR^n_{+}\) (with the interpretation that \(0^{\frac{1}{n}} = 0\)).

Let arithmetic mean be given by:

Consider the set:

\(A\) is the set of nonnegative vectors where the geometric mean of the components is at least \(\alpha\) times larger than the arithmetic mean.

In Example 9.30 we establish that \(g\) is concave.

In Example 9.26 we established that \(a\) is convex as well as concave.

Thus, \(-\alpha a\) is concave.

Thus, \(g - \alpha a\) is concave (with \(\dom g - \alpha a = \RR^n_+\)).

Note that \(A\) can be redefined as:

\[ A = \{ \bx \in \RR^n_+ \ST g(\bx) - \alpha a(\bx) \geq 0 \}. \]Thus, \(A\) is a \(0\)-super-level set of \(g - \alpha a\).

Since \(g - \alpha a\) is concave, hence \(A\) is convex.

9.8.10. Closed Convex Functions#

Recall from Real Valued Functions that a function is closed if all its sublevel sets are closed. A function is closed if and only if its epigraph is closed. A function is closed if and only if it is lower semicontinuous.

In general, if a function is continuous, then it is lower semicontinuous and hence it is closed.

In this subsection, we provide examples of convex functions which are closed.

9.8.10.1. Affine Functions#

Theorem 9.86 (Affine functions are closed)

Let \(f: \VV \to \RR\) be given by

where \(\ba \in \VV^*\) and \(b \in \RR\). Then \(f\) is closed.

Proof. We prove closedness by showing that the epigraph of \(f\) is closed.

Let \(\{ \bx_k, t_k \}\) be a converging sequence of \(\epi f\).

Let \((\bx, t) = \lim_{k \to \infty} (\bx_k, t_k)\).

We have \(f(\bx_k) \leq t_k\) for every \(k\).

In other words

\[ \langle \bx_k, \ba \rangle + b \leq t_k \Forall k \in \Nat. \]Taking the limit on both sides, we get

\[ \langle \bx, \ba \rangle + b \leq t. \]Hence \(f(\bx) \leq t\).

Hence \((\bx, t) \in \epi f\).

Hence \(\epi f\) is closed.

9.8.10.2. Norms#

Theorem 9.87 (All Norms are closed)

Let \(\| \cdot \|: \VV \to \RR\) be a norm on a real vector space \(\VV\). Then \(\| \cdot \|\) is closed.

Proof. The sublevel sets are given by \(S_t = \{ \bx \in \VV \ST \| \bx \| \leq t \}\). They are nothing but the closed balls of radius \(t\) around \(\bzero\) and by definition closed. Hence all sublevel sets are closed. Thus \(\| \cdot \|\) is closed.

9.8.11. Support Functions#

Definition 9.51 (Support function for a set)

Let \(\VV\) be a real inner product space. Let \(C\) be a subset of \(\VV\). The support function \(\sigma_C : \VV \to \RERL\) is defined as

Since \(\VV\) and \(\VV^*\) are isomorphic, support function \(\sigma_C : \VV^* \to \RERL\) for a set \(C \subseteq \VV^*\) is similarly defined as

Example 9.36 (Finite sets)

Let \(C = \{ \bb_1, \dots, \bb_m \}\) be a finite subset of \(\VV\). Then,

This follows directly from the definition.

9.8.11.1. Convexity#

Theorem 9.88 (Convexity of support function)

Let \(\VV\) be a real inner product space. Let \(C\) be a nonempty subset of \(\VV\). Then, the support function \(\sigma_C : \VV \to \RERL\) is convex.

Proof. Fix a \(\by \in C\) and consider the function \(\sigma_{\by} : \VV \to \RR\) given by

\(\sigma_{\by}\) is linear and accordingly convex. Then,

is a pointwise supremum of convex functions. By Theorem 9.114, \(\sigma_C\) is convex.

We note that the convexity of the support function \(\sigma_C\) has nothing to do with the convexity of the underlying set \(C\).

9.8.11.2. Closedness#

Theorem 9.89 (Closedness of support function)

Let \(\VV\) be a real inner product space. Let \(C\) be a nonempty subset of \(\VV\). Then, the support function \(\sigma_C : \VV \to \RERL\) is closed.

Proof. Recall that a function is closed if all its sublevel sets are closed.

Let \(a \in \RR\).

Consider the sublevel set \(S_a = \{ \bx \in \VV \ST \sigma_C(\bx) \leq a \}\).

Then,

\[ S_a = \{ \bx \in \VV \ST \sup_{\by \in C} \langle \bx, \by \rangle \leq a \} \]Thus,

\[ S_a = \{ \bx \in \VV \ST \langle \bx, \by \rangle \leq a \Forall \by \in C \} \]Define \(A_y\) as

\[ A_y = \{ \bx \in \VV \ST \langle \bx, \by \rangle \leq a \}. \]Then,

\[ S_a = \bigcap_{\by \in C} A_y. \]Now, \(A_y\) is a closed set since \(\langle \bx, \by \rangle\) is a continuous function.

Thus, \(S_a\) is an intersection of closed sets.

Thus, \(S_a\) is closed.

Thus, all sublevel sets of \(\sigma_C\) are closed.

Thus, \(\sigma_C\) is closed.

9.8.11.3. Equality of Underlying Sets#

Theorem 9.90 (Equality of underlying sets for support functions)

Let \(A, B \subseteq \VV\) be nonempty, closed and convex sets. Then, \(A = B\) if and only if \(\sigma_A = \sigma_B\).

Proof. If \(A = B\) then obviously \(\sigma_A = \sigma_B\). Now, assume that \(\sigma_A = \sigma_B\).

For contradiction, assume that \(A \neq B\).

Without loss of generality, assume that there exists \(\by \in A\) such that \(\by \notin B\).

Since \(\by \notin B\) and \(B\) is a closed convex set, hence, by Theorem 9.169, there exists a hyperplane \(H\) strongly separating \(\by\) from \(B\).

Thus, there exists \(\bp \in \VV^*\) and \(\alpha \in \RR\) such that

\[ \langle \bx , \bp \rangle \leq \alpha < \langle \by, \bp \rangle \Forall \bx \in B. \]Taking supremum over \(B\) on the L.H.S., we obtain

\[ \sigma_B(\bp) \leq \alpha < \langle \by, \bp \rangle \leq \sigma_A(\bp). \]Thus, there exists \(\bp \in \VV^*\) such that

\[ \sigma_B(\bp) < \sigma_A(\bp). \]This contradicts our hypothesis that \(\sigma_A\) and \(\sigma_B\) are identical.

Thus, \(A = B\) must hold.

9.8.11.4. Closure and Convex Hull#

The next result shows that support function for a set and its closure or its convex hull are identical. This is why, we required \(A,B\) to be closed and convex in the previous result.

Theorem 9.91 (Support functions and closure or convex hull of underlying set)

Let \(A \subseteq \VV\). Then,

\(\sigma_A = \sigma_{\closure A}\).

\(\sigma_A = \sigma_{\convex A}\).

Proof. We first consider the case of closure.

\(A \subseteq \closure A\).

Thus, \(\sigma_A (\by) \leq \sigma_{\closure A}(\by) \Forall \by \in \VV^*\).

Let us now show the reverse inequality.

Let \(\by \in \VV^*\).

Then, there exists a sequence \(\{ \bx_k \}\) of \(\closure A\) such that

\[ \lim_{k \to \infty} \langle \bx_k, \by \rangle = \sigma_{\closure A} (\by). \]Now for every \(\bx_k \in \closure A\), there exists a point \(\bz_k \in A\) such that \(d(\bx_k, \bz_k) \leq \frac{1}{k}\).

Thus, \(\lim_{k \to \infty} (\bz_k - \bx_k) = \bzero\).

Since \(\bz_k \in A\), hence

\[ \sigma_A(\by) \geq \langle \bz_k, \by \rangle = \langle \bx_k, \by \rangle + \langle \bz_k - \bx_k, \by \rangle. \]Taking the limit \(k \to \infty\) on the R.H.S., we obtain

\[ \sigma_A(\by) \geq \sigma_{\closure A}(\by) + 0 = \sigma_{\closure A}(\by). \]Thus, \(\sigma_A(\by) = \sigma_{\closure A}(\by)\) must hold true.

Since this is true for every \(\by \in \VV^*\), hence \(\sigma_A = \sigma_{\closure A}\).

Now, consider the case of convex hull.

By definition, \(A \subseteq \convex A\).

Thus, \(\sigma_A (\by) \leq \sigma_{\convex A}(\by) \Forall \by \in \VV^*\).

Let \(\by \in \VV^*\).

Then, there exists a sequence \(\{ \bx_k \}\) of \(\convex A\) such that

\[ \lim_{k \to \infty} \langle \bx_k, \by \rangle = \sigma_{\convex A} (\by). \]Since \(\bx_k \in \convex A\), hence, there exists \(\bz_1^k, \dots, \bz_{n_k}^k \in A\) and \(\bt^k \in \Delta_{n_k}\) such that

\[ \bx_k = \sum_{i=1}^{n_k} t_i^k \bz_i^k. \]By linearity of the inner product

\[\begin{split} \langle \bx_k, \by \rangle &= \left \langle \sum_{i=1}^{n_k} t_i^k \bz_i^k, \by \right \rangle\\ &= \sum_{i=1}^{n_k} t_i^k \langle \bz_i^k, \by \rangle\\ &\leq \sum_{i=1}^{n_k} t_i^k \sigma_A(\by)\\ &= \sigma_A(\by). \end{split}\]Taking the limit \(k \to \infty\) on the L.H.S., we obtain

\[ \sigma_{\convex A} (\by) = \lim_{k \to \infty} \langle \bx_k, \by \rangle \leq \sigma_A(\by). \]Thus, \(\sigma_A(\by) = \sigma_{\convex A}(\by)\) must hold true.

Since this is true for every \(\by \in \VV^*\), hence \(\sigma_A = \sigma_{\convex A}\).

9.8.11.5. Arithmetic Properties#

Following properties of support functions are useful in several applications.

Theorem 9.92 (Arithmetic properties of support functions)

(Nonnegative homogeneity) For any nonempty set \(C \subseteq \VV\) and a vector \(\bx \in \VV\) and \(t \geq 0\),

\[ \sigma_C (t \bx) = t \sigma_C (\bx). \](Subadditivity) For any nonempty set \(C \subseteq \VV\) and a vector \(\bu, \bv \in \VV\),

\[ \sigma_C(\bu + \bv) \leq \sigma_C (\bu) + \sigma_C (\bv). \](Nonnegative scaling of the underlying set) For any nonempty set \(C \subseteq \VV\) and a vector \(\bx \in \VV\) and \(t \geq 0\)

\[ \sigma_{t C} (\by) = t \sigma_C(\by). \](Additivity over Minkowski sum of sets) For any two nonempty subsets \(A, B \subseteq \VV\) and \(\bx \in \VV\)

\[ \sigma_{A + B} (\bx) = \sigma_A(\bx) + \sigma_B(\bx). \]

Proof. (1) Nonnegative homogeneity

Here, we used the fact that \(\sup\) commutes with nonnegative scalars.

(2) Subadditivity

(3) Nonnegative scaling of the underlying set

(4) Minkowski sum

9.8.11.6. Cones#

Recall from Definition 9.22 that a set \(C\) is called a cone if for every \(\bx \in C\) and every \(t \geq 0\), \(t \bx \in C\). Also, recall from Definition 9.34 that the polar cone of a set \(C\) is given by

Theorem 9.93 (Support function of a cone)

Let \(C\) be a given cone. Then,

In words, the support function of a cone \(C\) is the indicator function of the polar cone of \(C\).

Proof. We proceed as follows

Assume that \(\by \in C^{\circ}\).

Then, \(\langle \bx, \by \rangle \leq 0 \Forall \bx \in C\).

In particular, \(\bzero \in C\) since \(C\) is a cone.

Accordingly, \(\langle \bzero, \by \rangle = 0\).

Thus,

\[ \sigma_C(\by) = \sup_{\bx \in C} \langle \bx, \by \rangle = 0. \]Now consider \(\by \notin C^{\circ}\).

Then, there exists \(\bu \in C\) such that \(\langle \bu, \by \rangle > 0\).

Since \(C\) is a cone, hence \(t \bu \in C\) for all \(t \geq 0\).

Accordingly,

\[ \sigma_C(\by) = \sup_{\bx \in C} \langle \bx, \by \rangle \geq \langle t \bu, \by \rangle \Forall t \geq 0. \]Taking the limit \(t \to \infty\), we see that

\[ \sigma_C(\by) = \infty. \]Thus, \(\sigma_C(\by) = 0\) for all \(\by \in C^{\circ}\) and \(\sigma_C(\by) = \infty\) otherwise.

Thus, \(\sigma_C = I_{C^{\circ}}\).

Example 9.37 (Support function of nonnegative orthant)

Let \(\VV = \RR^n\) and \(C = \RR^n_+\). \(C\) is the nonnegative orthant which is a closed convex cone. Its polar cone is given by

which is the nonpositive orthant \(\{\bx \in \RR^n \ST \bx \preceq \bzero \}\). By Theorem 9.93,

9.8.11.7. Affine Sets#

Theorem 9.94 (Support function for an affine set)

Let \(\BB \in \RR^{m \times n}\) and \(\bb \in \RR^m\). Define the set \(C \subseteq \RR^n\) as

Assume that \(C\) is nonempty and let \(\bx_0 \in C\) be one of the solutions of the system of equations \(\bB \bx = \bb\). Then

Proof. We proceed as follows.

By definition of support function

\[ \sigma_C(\by) = \sup \{ \langle \bx, \by \rangle \ST \bB \bx = \bb \}. \]Introduce a variable \(\bz = \bx - \bx_0\).

Then \(\bx = \bz + \bx_0\).

Accordingly

\[\begin{split} \sigma_C(\by) &= \sup \{ \langle \bz + \bx_0, \by \rangle \ST \bB (\bz + \bx_0) = \bb \} \\ &= \langle \bx_0, \by \rangle + \sup \{ \langle \bz, \by \rangle \ST \bB \bz = \bzero \} \\ &= \langle \bx_0, \by \rangle + \sigma_D (\by) \end{split}\]where \(D = \{\bx \ST \bB \bx = \bzero \}\).

We note that the statement \(\bB \bx = \bzero\) is equivalent to \(\bB \bx \succeq \bzero\) and \(\bB \bx \preceq \bzero\).

In other words, \(D = \{ \bx \in \RR^n \ST \bA \bx \preceq \bzero \}\) where \(\bA = \begin{bmatrix}\bB \\ - \bB \end{bmatrix}\).

The set \(D\) is a convex polyhedral cone.

By Theorem 9.93, the support function of a cone is the indicator function of its polar cone.

By Theorem 9.66, the polar cone is given by

\[ D^{\circ} = \{ \bB^T \bt_1 - \bB^T \bt_2 \ST \bt_1, \bt_2 \succeq \bzero \}. \]It is easy to see that \(D^{\circ} = \range (\bB^T)\).

Every vector \(\bt \in \RR^m\) can be split into two vectors \(\bt_1, \bt_2 \in \RR^m_+\) such that \(\bt = \bt_1 - \bt_2\).

Accordingly \(\bB^T \bt = \bB^T \bt_1 - \bB^T \bt_2\).

This gives us

\[ \sigma_C(\by) = \langle \bx_0, \by \rangle + I_{D^{\circ}} (\by). \]

9.8.11.8. Norm Balls#

Theorem 9.95 (Support functions for unit balls)

Let \(\VV\) be a real vector space endowed with a norm \(\| \cdot \|: \VV \to \RR\). Consider the (closed) unit ball given by

Then, the support function is given by

where \(\| \cdot \|_*: \VV \to \RR\) represents the dual norm.

Proof. This flows directly from the definitions of support function and dual norm.

9.8.12. Gauge Functions#

Definition 9.52 (Gauge function for a set)

Let \(\VV\) be a real vector space. Let \(C\) be a nonempty subset of \(\VV\). The gauge function \(\gamma_C : \VV \to \RERL\) is defined as

If \(\bx \notin r C\) for any \(r \geq 0\), then \(\gamma_C(\bx) = \infty\). This is consistent with the convention that \(\inf \EmptySet = \infty\).

The gauge function is also known as Minkowski functional.

Property 9.33 (Nonnegativity)

The Gauge function is always nonnegative.

Property 9.34 (Value at origin)

Property 9.35 (Subadditive)

If \(C\) is convex, then the gauge function is subadditive.

Proof. We proceed as follows.

If \(\gamma_C (\bx) = \infty\) or \(\gamma_C(\by) = \infty\), then the inequality is satisfied trivially. So assume that both are finite.

Then, the sets \(X = \{r \geq 0 \ST \bx \in r C \}\) and \(Y = \{r \geq 0 \ST \by \in r C \}\) are not empty.

Thus, we can choose some \(s \geq \gamma_C (\bx)\) from \(X\) and \(t \geq \gamma_C(\by)\) from \(Y\).

If \(s=0\) or \(t=0\), then \(\bzero \in C\). Consequently,

\[ \gamma_C (\bx + \by) = \gamma_C (\bx) = \gamma_C (\by) = 0 \leq \gamma_C (\bx) + \gamma_C(\by) \leq s + t. \]and the inequality is satisfied.

Now, consider the case where \(s > 0\) and \(t > 0\).

Then, \(\frac{\bx}{s} \in C\) and \(\frac{\by}{t} \in C\).

Now,

\[ \frac{\bx + \by}{s + t} = \frac{s}{s + t} \frac{\bx}{s} + \frac{t}{s + t}\frac{\by}{t}. \]Thus, \(\frac{\bx + \by}{s + t}\) is a convex combination of \(\frac{\bx}{s}\) and \(\frac{\by}{t}\).

Since \(C\) is convex, hence \(\frac{\bx + \by}{s + t} \in C\).

Thus, \(s + t \in \{ r \geq 0 \ST (\bx + \by) \in rC \}\).

Thus, \(\gamma_C (\bx + \by) \leq s + t\).

Thus, for every \(s \geq \gamma_C (\bx)\) and every \(t \geq \gamma_C(\by)\), \(\gamma_C (\bx + \by) \leq s + t\).

Taking infimum on the R.H.S. over \(s \in X\) and \(t \in Y\), we obtain, \(\gamma_C (\bx + \by) \leq \gamma_C (\bx) + \gamma_C(\by)\).

Property 9.36 (Homogeneous)

The Gauge function is homogeneous.

Property 9.37 (Seminorm)

The Gauge function is a seminorm.

Example 9.38 (Norm as a gauge function)

Let \(\VV\) be a normed linear space with the norm \(\| \cdot \| : \VV \to \RR\).

Let \(\overline{B} = \{\bx \in \VV \ST \| \bx \| \leq 1 \}\) be the unit closed ball.

Then,

The gauge function for the closed unit ball is simply the norm itself.

9.8.13. Jensen’s Inequality#

Jensen’s inequality stated below is another formulation for convex functions.

Theorem 9.96 (Jensen’s inequality)

A proper function \(f: \VV \to \RERL\) is convex if and only if

holds true for every \(\bx_1, \dots, \bx_k \in \VV\) whenever \(t_1, \dots, t_k \geq 0\) and \(t_1 + \dots + t_k = 1\). This inequality is known as the Jensen’s inequality.

Proof. The Jensen’s inequality reduces to (9.1) for \(k=2\). Thus, the statement is true by definition for \(k=2\). For \(k > 2\), we shall present an inductive proof.

Assume \(f\) is convex. Then \(\dom f\) is convex and for all \(\bx_1,\bx_2 \in \dom f\), and \(t \in [0, 1]\), we have:

Let \(\bx_1, \dots, \bx_k \in \VV\).

If any of \(\bx_i \notin \dom f\) for some \(i \in 1,\dots,k\), then \(f(\bx_i) = \infty\) and the Jensen’s inequality holds trivially.

Thus, we shall assume that \(\bx_1, \dots, \bx_k \in \dom f\).

Since \(\dom f\) is convex, hence their convex combination \(t_1 \bx_1 + \dots + t_k \bx_k \in \dom f\).

Inductively, assume that the Jensen’s inequality holds for \(k-1\); i.e.,

\[ f(r_1 \bx_1 + \dots + r_{k-1} \bx_{k-1}) \leq r_1 f(\bx_1) + \dots + r_{k-1} f(\bx_{k-1}) \]holds true whenever \(r_1, \dots, r_{k-1} \geq 0\) and \(r_1 + \dots + r_{k-1} = 1\).

WLOG, assume that \(t_k < 1\). Thus, \(1 - t_k > 0\).

Define \(\by = \sum_{i=1}^{k-1} t'_i \bx_i\) where \(t'_i = \frac{t_i}{1 - t_k}\).

Note that \(t'_i \geq 0\). Also, \(\sum_{i=1}^{k-1} t'_i = 1\) since \(\sum_{i=1}^{k-1} t_i = 1 - t_k\).

We can now write:

\[\begin{split} f(t_1 \bx_1 + \dots + t_k \bx_k) &= f((1 - t_k) \by + t_k \bx_k )\\ &\leq (1 - t_k)f (\by) + t_k f(\bx_k)\\ &= (1 - t_k) f(t'_1 \bx_1 + \dots t'_{k-1} \bx_{k-1}) + t_k f(\bx_k)\\ &\leq (1 - t_k) ( t'_1 f(\bx_1) + \dots + t'_{k-1} f(\bx_{k-1})) + t_k f(\bx_k)\\ &= t_1 f(\bx_1) + \dots + t_{k-1} f(\bx_{k-1}) + t_k f(\bx_k). \end{split}\]Thus, \(f\) satisfies Jensen’s inequality.

For the converse, assume that \(f\) satisfies Jensen’s inequality. Let \(\bx_1, \bx_2 \in \dom f\) and \(t \in [0,1]\). Then, by Jensen’s inequality for \(k=2\),

Thus, \(t \bx_1 + (1-t) \bx_2 \in \dom f\). Thus, \(\dom f\) is convex. Also, \(f\) satisfies (9.1). Hence, \(f\) is convex.

Jensen’s inequality is essential in proving a number of famous inequalities.

Example 9.39 (Logarithm and Jensen’s inequality)

In Example 9.45, we show that \(\ln(x)\) is concave. Consequently, \(-\ln(x)\) is convex.

Now, let \(x_1, \dots, x_n \in \RR_{++}\) be positive real numbers and let \(t_1, \dots, t_n \geq 0\) such that \(t_1 + \dots + t_n = 1\). Then, by Jensen’s inequality

Multiplying by \(-1\) and taking exponential on both sides, we obtain

For a particular choice of \(t_1 = \dots = t_n = \frac{1}{n}\), we obtain

which is the AM-GM inequality suggesting that arithmetic mean is greater than or equal to the geometric mean for a group of positive real numbers.

Theorem 9.97 (Jensen’s inequality for nonnegative homogeneous convex functions)

If \(f: \VV \to \RERL\) is a nonnegative homogeneous proper convex function, then

holds true for every \(\bx_1, \dots, \bx_k \in \VV\) whenever \(t_1, \dots, t_k \geq 0\).

Proof. Let \(\bx_1, \dots, \bx_k \in \VV\). If any of \(\bx_i \notin \dom f\) for some \(i \in 1,\dots,k\), then \(f(\bx_i) = \infty\) and the inequality holds trivially. Thus, we shall assume that \(\bx_1, \dots, \bx_k \in \dom f\).

By Theorem 9.75, \(f\) is subadditive. Thus,

The nonnegative homogeneity gives us

We are done.

9.8.14. Quasi-Convex Functions#

Definition 9.53 (Quasi convex function)

Let \(\VV\) be a real vector space. Let \(f : \VV \to \RR\) be a real valued function. Let the sublevel sets of \(f\) be given by

If the sublevel sets \(C_{\alpha}\) of \(f\) are convex for every \(\alpha \in \RR\), then \(f\) is called a quasi-convex function.